AI Memory and Conversation Management Framework - Simple as mem0, Powerful as MemU

Project description

memU is a memory framework built for 24/7 proactive agents. It is designed for long-running use and greatly reduces the LLM token cost of keeping agents always online, making always-on, evolving agents practical in production systems. memU continuously captures and understands user intent. Even without a command, the agent can tell what you are about to do and act on it by itself.

🤖 OpenClaw (Moltbot, Clawdbot) Alternative

memU Bot — Now open source. The enterprise-ready OpenClaw. Your proactive AI assistant that remembers everything.

- Download-and-use and simple to get started (one-click install, < 3 min).

- Builds long-term memory to understand user intent and act proactively (24/7).

- Cuts LLM token cost with smaller context (~1/10 of comparable usage).

Try now: memu.bot · Source: memUBot on GitHub

🗃️ Memory as File System, File System as Memory

memU treats memory like a file system—structured, hierarchical, and instantly accessible.

| File System | memU Memory |

|---|---|

| 📁 Folders | 🏷️ Categories (auto-organized topics) |

| 📄 Files | 🧠 Memory Items (extracted facts, preferences, skills) |

| 🔗 Symlinks | 🔄 Cross-references (related memories linked) |

| 📂 Mount points | 📥 Resources (conversations, documents, images) |

Why this matters:

- Navigate memories like browsing directories—drill down from broad categories to specific facts

- Mount new knowledge instantly—conversations and documents become queryable memory

- Cross-link everything—memories reference each other, building a connected knowledge graph

- Persistent & portable—export, backup, and transfer memory like files

memory/

├── preferences/

│ ├── communication_style.md

│ └── topic_interests.md

├── relationships/

│ ├── contacts/

│ └── interaction_history/

├── knowledge/

│ ├── domain_expertise/

│ └── learned_skills/

└── context/

├── recent_conversations/

└── pending_tasks/

Just as a file system turns raw bytes into organized data, memU transforms raw interactions into structured, searchable, proactive intelligence.

⭐️ Star the repository

If you find memU useful or interesting, a GitHub Star ⭐️ would be greatly appreciated.

If you find memU useful or interesting, a GitHub Star ⭐️ would be greatly appreciated.

✨ Core Features

| Capability | Description |

|---|---|

| 🤖 24/7 Proactive Agent | Always-on memory agent that works continuously in the background—never sleeps, never forgets |

| 🎯 User Intention Capture | Understands and remembers user goals, preferences, and context across sessions automatically |

| 💰 Cost Efficient | Reduces long-running token costs by caching insights and avoiding redundant LLM calls |

🔄 How Proactive Memory Works

cd examples/proactive

python proactive.py

Proactive Memory Lifecycle

┌──────────────────────────────────────────────────────────────────────────────────────────────────┐

│ USER QUERY │

└──────────────────────────────────────────────────────────────────────────────────────────────────┘

│ │

▼ ▼

┌────────────────────────────────────────┐ ┌────────────────────────────────────────────────┐

│ 🤖 MAIN AGENT │ │ 🧠 MEMU BOT │

│ │ │ │

│ Handle user queries & execute tasks │ ◄───► │ Monitor, memorize & proactive intelligence │

├────────────────────────────────────────┤ ├────────────────────────────────────────────────┤

│ │ │ │

│ ┌──────────────────────────────────┐ │ │ ┌──────────────────────────────────────────┐ │

│ │ 1. RECEIVE USER INPUT │ │ │ │ 1. MONITOR INPUT/OUTPUT │ │

│ │ Parse query, understand │ │ ───► │ │ Observe agent interactions │ │

│ │ context and intent │ │ │ │ Track conversation flow │ │

│ └──────────────────────────────────┘ │ │ └──────────────────────────────────────────┘ │

│ │ │ │ │ │

│ ▼ │ │ ▼ │

│ ┌──────────────────────────────────┐ │ │ ┌──────────────────────────────────────────┐ │

│ │ 2. PLAN & EXECUTE │ │ │ │ 2. MEMORIZE & EXTRACT │ │

│ │ Break down tasks │ │ ◄─── │ │ Store insights, facts, preferences │ │

│ │ Call tools, retrieve data │ │ inject │ │ Extract skills & knowledge │ │

│ │ Generate responses │ │ memory │ │ Update user profile │ │

│ └──────────────────────────────────┘ │ │ └──────────────────────────────────────────┘ │

│ │ │ │ │ │

│ ▼ │ │ ▼ │

│ ┌──────────────────────────────────┐ │ │ ┌──────────────────────────────────────────┐ │

│ │ 3. RESPOND TO USER │ │ │ │ 3. PREDICT USER INTENT │ │

│ │ Deliver answer/result │ │ ───► │ │ Anticipate next steps │ │

│ │ Continue conversation │ │ │ │ Identify upcoming needs │ │

│ └──────────────────────────────────┘ │ │ └──────────────────────────────────────────┘ │

│ │ │ │ │ │

│ ▼ │ │ ▼ │

│ ┌──────────────────────────────────┐ │ │ ┌──────────────────────────────────────────┐ │

│ │ 4. LOOP │ │ │ │ 4. RUN PROACTIVE TASKS │ │

│ │ Wait for next user input │ │ ◄─── │ │ Pre-fetch relevant context │ │

│ │ or proactive suggestions │ │ suggest│ │ Prepare recommendations │ │

│ └──────────────────────────────────┘ │ │ │ Update todolist autonomously │ │

│ │ │ └──────────────────────────────────────────┘ │

└────────────────────────────────────────┘ └────────────────────────────────────────────────┘

│ │

└───────────────────────────┬───────────────────────────────┘

▼

┌──────────────────────────────┐

│ CONTINUOUS SYNC LOOP │

│ Agent ◄──► MemU Bot ◄──► DB │

└──────────────────────────────┘

🎯 Proactive Use Cases

1. Information Recommendation

Agent monitors interests and proactively surfaces relevant content

# User has been researching AI topics

MemU tracks: reading history, saved articles, search queries

# When new content arrives:

Agent: "I found 3 new papers on RAG optimization that align with

your recent research on retrieval systems. One author

(Dr. Chen) you've cited before published yesterday."

# Proactive behaviors:

- Learns topic preferences from browsing patterns

- Tracks author/source credibility preferences

- Filters noise based on engagement history

- Times recommendations for optimal attention

2. Email Management

Agent learns communication patterns and handles routine correspondence

# MemU observes email patterns over time:

- Response templates for common scenarios

- Priority contacts and urgent keywords

- Scheduling preferences and availability

- Writing style and tone variations

# Proactive email assistance:

Agent: "You have 12 new emails. I've drafted responses for 3 routine

requests and flagged 2 urgent items from your priority contacts.

Should I also reschedule tomorrow's meeting based on the

conflict John mentioned?"

# Autonomous actions:

✓ Draft context-aware replies

✓ Categorize and prioritize inbox

✓ Detect scheduling conflicts

✓ Summarize long threads with key decisions

3. Trading & Financial Monitoring

Agent tracks market context and user investment behavior

# MemU learns trading preferences:

- Risk tolerance from historical decisions

- Preferred sectors and asset classes

- Response patterns to market events

- Portfolio rebalancing triggers

# Proactive alerts:

Agent: "NVDA dropped 5% in after-hours trading. Based on your past

behavior, you typically buy tech dips above 3%. Your current

allocation allows for $2,000 additional exposure while

maintaining your 70/30 equity-bond target."

# Continuous monitoring:

- Track price alerts tied to user-defined thresholds

- Correlate news events with portfolio impact

- Learn from executed vs. ignored recommendations

- Anticipate tax-loss harvesting opportunities

...

🗂️ Hierarchical Memory Architecture

MemU's three-layer system enables both reactive queries and proactive context loading:

| Layer | Reactive Use | Proactive Use |

|---|---|---|

| Resource | Direct access to original data | Background monitoring for new patterns |

| Item | Targeted fact retrieval | Real-time extraction from ongoing interactions |

| Category | Summary-level overview | Automatic context assembly for anticipation |

Proactive Benefits:

- Auto-categorization: New memories self-organize into topics

- Pattern Detection: System identifies recurring themes

- Context Prediction: Anticipates what information will be needed next

🚀 Quick Start

Option 1: Cloud Version

Experience proactive memory instantly:

👉 memu.so - Hosted service with 7×24 continuous learning

For enterprise deployment with custom proactive workflows, contact info@nevamind.ai

Cloud API (v3)

| Base URL | https://api.memu.so |

|---|---|

| Auth | Authorization: Bearer YOUR_API_KEY |

| Method | Endpoint | Description |

|---|---|---|

POST |

/api/v3/memory/memorize |

Register continuous learning task |

GET |

/api/v3/memory/memorize/status/{task_id} |

Check real-time processing status |

POST |

/api/v3/memory/categories |

List auto-generated categories |

POST |

/api/v3/memory/retrieve |

Query memory (supports proactive context loading) |

Option 2: Self-Hosted

Installation

pip install -e .

Basic Example

Requirements: Python 3.13+ and an OpenAI API key

Test Continuous Learning (in-memory):

export OPENAI_API_KEY=your_api_key

cd tests

python test_inmemory.py

Test with Persistent Storage (PostgreSQL):

# Start PostgreSQL with pgvector

docker run -d \

--name memu-postgres \

-e POSTGRES_USER=postgres \

-e POSTGRES_PASSWORD=postgres \

-e POSTGRES_DB=memu \

-p 5432:5432 \

pgvector/pgvector:pg16

# Run continuous learning test

export OPENAI_API_KEY=your_api_key

cd tests

python test_postgres.py

Both examples demonstrate proactive memory workflows:

- Continuous Ingestion: Process multiple files sequentially

- Auto-Extraction: Immediate memory creation

- Proactive Retrieval: Context-aware memory surfacing

See tests/test_inmemory.py and tests/test_postgres.py for implementation details.

Custom LLM and Embedding Providers

MemU supports custom LLM and embedding providers beyond OpenAI. Configure them via llm_profiles:

from memu import MemUService

service = MemUService(

llm_profiles={

# Default profile for LLM operations

"default": {

"base_url": "https://dashscope.aliyuncs.com/compatible-mode/v1",

"api_key": "your_api_key",

"chat_model": "qwen3-max",

"client_backend": "sdk" # "sdk" or "http"

},

# Separate profile for embeddings

"embedding": {

"base_url": "https://api.voyageai.com/v1",

"api_key": "your_voyage_api_key",

"embed_model": "voyage-3.5-lite"

}

},

# ... other configuration

)

OpenRouter Integration

MemU supports OpenRouter as a model provider, giving you access to multiple LLM providers through a single API.

Configuration

from memu import MemoryService

service = MemoryService(

llm_profiles={

"default": {

"provider": "openrouter",

"client_backend": "httpx",

"base_url": "https://openrouter.ai",

"api_key": "your_openrouter_api_key",

"chat_model": "anthropic/claude-3.5-sonnet", # Any OpenRouter model

"embed_model": "openai/text-embedding-3-small", # Embedding model

},

},

database_config={

"metadata_store": {"provider": "inmemory"},

},

)

Environment Variables

| Variable | Description |

|---|---|

OPENROUTER_API_KEY |

Your OpenRouter API key from openrouter.ai/keys |

Supported Features

| Feature | Status | Notes |

|---|---|---|

| Chat Completions | Supported | Works with any OpenRouter chat model |

| Embeddings | Supported | Use OpenAI embedding models via OpenRouter |

| Vision | Supported | Use vision-capable models (e.g., openai/gpt-4o) |

Running OpenRouter Tests

export OPENROUTER_API_KEY=your_api_key

# Full workflow test (memorize + retrieve)

python tests/test_openrouter.py

# Embedding-specific tests

python tests/test_openrouter_embedding.py

# Vision-specific tests

python tests/test_openrouter_vision.py

See examples/example_4_openrouter_memory.py for a complete working example.

📖 Core APIs

memorize() - Continuous Learning Pipeline

Processes inputs in real-time and immediately updates memory:

result = await service.memorize(

resource_url="path/to/file.json", # File path or URL

modality="conversation", # conversation | document | image | video | audio

user={"user_id": "123"} # Optional: scope to a user

)

# Returns immediately with extracted memory:

{

"resource": {...}, # Stored resource metadata

"items": [...], # Extracted memory items (available instantly)

"categories": [...] # Auto-updated category structure

}

Proactive Features:

- Zero-delay processing—memories available immediately

- Automatic categorization without manual tagging

- Cross-reference with existing memories for pattern detection

retrieve() - Dual-Mode Intelligence

MemU supports both proactive context loading and reactive querying:

RAG-based Retrieval (method="rag")

Fast proactive context assembly using embeddings:

- ✅ Instant context: Sub-second memory surfacing

- ✅ Background monitoring: Can run continuously without LLM costs

- ✅ Similarity scoring: Identifies most relevant memories automatically

LLM-based Retrieval (method="llm")

Deep anticipatory reasoning for complex contexts:

- ✅ Intent prediction: LLM infers what user needs before they ask

- ✅ Query evolution: Automatically refines search as context develops

- ✅ Early termination: Stops when sufficient context is gathered

Comparison

| Aspect | RAG (Fast Context) | LLM (Deep Reasoning) |

|---|---|---|

| Speed | ⚡ Milliseconds | 🐢 Seconds |

| Cost | 💰 Embedding only | 💰💰 LLM inference |

| Proactive use | Continuous monitoring | Triggered context loading |

| Best for | Real-time suggestions | Complex anticipation |

Usage

# Proactive retrieval with context history

result = await service.retrieve(

queries=[

{"role": "user", "content": {"text": "What are their preferences?"}},

{"role": "user", "content": {"text": "Tell me about work habits"}}

],

where={"user_id": "123"}, # Optional: scope filter

method="rag" # or "llm" for deeper reasoning

)

# Returns context-aware results:

{

"categories": [...], # Relevant topic areas (auto-prioritized)

"items": [...], # Specific memory facts

"resources": [...], # Original sources for traceability

"next_step_query": "..." # Predicted follow-up context

}

Proactive Filtering: Use where to scope continuous monitoring:

where={"user_id": "123"}- User-specific contextwhere={"agent_id__in": ["1", "2"]}- Multi-agent coordination- Omit

wherefor global context awareness

💡 Proactive Scenarios

Example 1: Always-Learning Assistant

Continuously learns from every interaction without explicit memory commands:

export OPENAI_API_KEY=your_api_key

python examples/example_1_conversation_memory.py

Proactive Behavior:

- Automatically extracts preferences from casual mentions

- Builds relationship models from interaction patterns

- Surfaces relevant context in future conversations

- Adapts communication style based on learned preferences

Best for: Personal AI assistants, customer support that remembers, social chatbots

Example 2: Self-Improving Agent

Learns from execution logs and proactively suggests optimizations:

export OPENAI_API_KEY=your_api_key

python examples/example_2_skill_extraction.py

Proactive Behavior:

- Monitors agent actions and outcomes continuously

- Identifies patterns in successes and failures

- Auto-generates skill guides from experience

- Proactively suggests strategies for similar future tasks

Best for: DevOps automation, agent self-improvement, knowledge capture

Example 3: Multimodal Context Builder

Unifies memory across different input types for comprehensive context:

export OPENAI_API_KEY=your_api_key

python examples/example_3_multimodal_memory.py

Proactive Behavior:

- Cross-references text, images, and documents automatically

- Builds unified understanding across modalities

- Surfaces visual context when discussing related topics

- Anticipates information needs by combining multiple sources

Best for: Documentation systems, learning platforms, research assistants

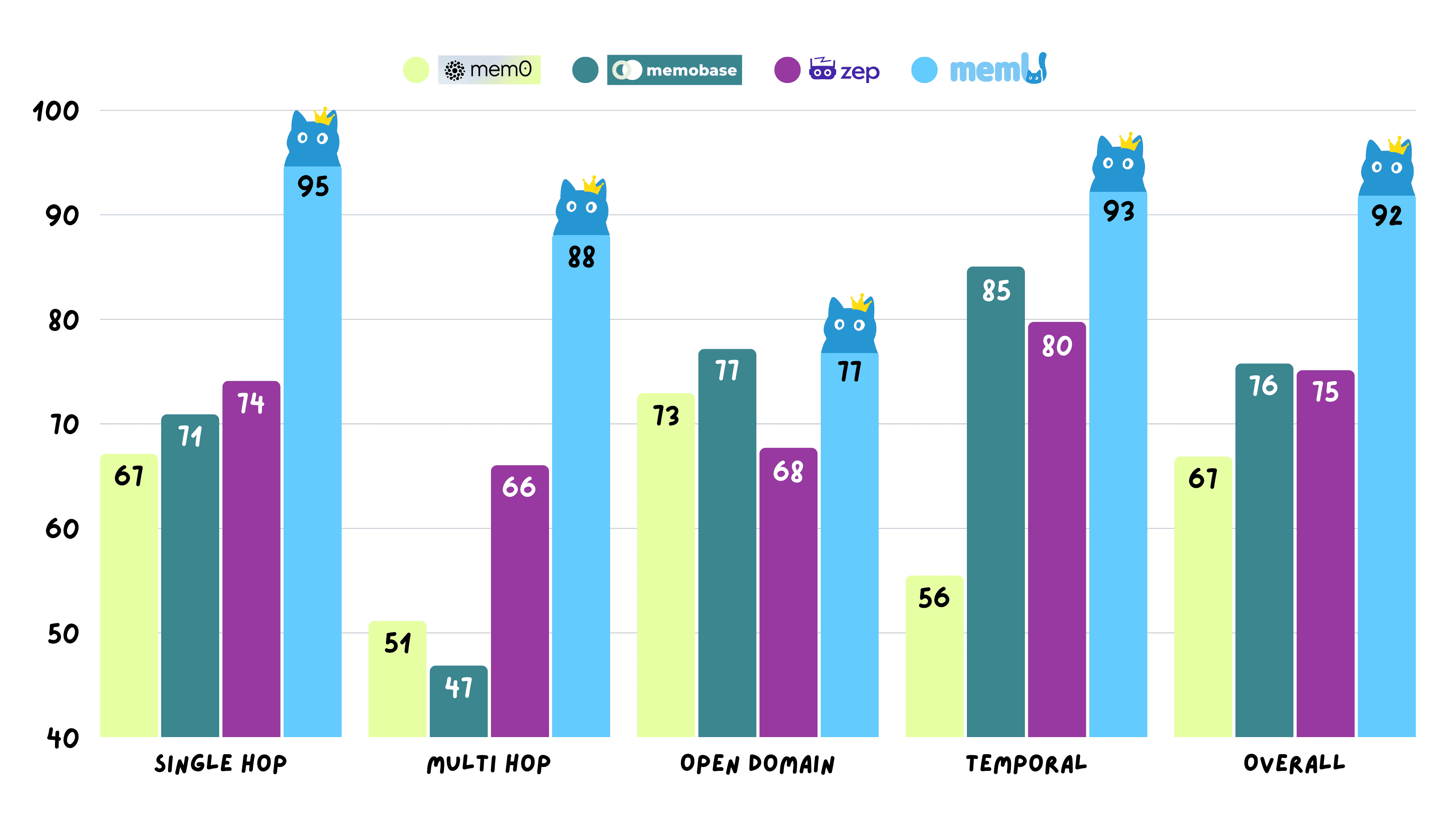

📊 Performance

MemU achieves 92.09% average accuracy on the Locomo benchmark across all reasoning tasks, demonstrating reliable proactive memory operations.

View detailed experimental data: memU-experiment

🧩 Ecosystem

| Repository | Description | Proactive Features |

|---|---|---|

| memU | Core proactive memory engine | 7×24 learning pipeline, auto-categorization |

| memU-server | Backend with continuous sync | Real-time memory updates, webhook triggers |

| memU-ui | Visual memory dashboard | Live memory evolution monitoring |

Quick Links:

🤝 Partners

🤝 How to Contribute

We welcome contributions from the community! Whether you're fixing bugs, adding features, or improving documentation, your help is appreciated.

Getting Started

To start contributing to MemU, you'll need to set up your development environment:

Prerequisites

- Python 3.13+

- uv (Python package manager)

- Git

Setup Development Environment

# 1. Fork and clone the repository

git clone https://github.com/YOUR_USERNAME/memU.git

cd memU

# 2. Install development dependencies

make install

The make install command will:

- Create a virtual environment using

uv - Install all project dependencies

- Set up pre-commit hooks for code quality checks

Running Quality Checks

Before submitting your contribution, ensure your code passes all quality checks:

make check

The make check command runs:

- Lock file verification: Ensures

pyproject.tomlconsistency - Pre-commit hooks: Lints code with Ruff, formats with Black

- Type checking: Runs

mypyfor static type analysis - Dependency analysis: Uses

deptryto find obsolete dependencies

Contributing Guidelines

For detailed contribution guidelines, code standards, and development practices, please see CONTRIBUTING.md.

Quick tips:

- Create a new branch for each feature or bug fix

- Write clear commit messages

- Add tests for new functionality

- Update documentation as needed

- Run

make checkbefore pushing

📄 License

🌍 Community

- GitHub Issues: Report bugs & request features

- Discord: Join the community

- X (Twitter): Follow @memU_ai

- Contact: info@nevamind.ai

⭐ Star us on GitHub to get notified about new releases!

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distributions

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file memu_py-1.5.1.tar.gz.

File metadata

- Download URL: memu_py-1.5.1.tar.gz

- Upload date:

- Size: 12.5 MB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

476789f33003a19b6f5d2883ddbae0a4f1d2f3c9e52ecff445e26620473d0f48

|

|

| MD5 |

ed2e6b657177c1a9e078ab662fcf3089

|

|

| BLAKE2b-256 |

9795e46407b2c83d7bb605340fc0a86f9b28ccf2d8e66270d40aa18ae0aff8e9

|

Provenance

The following attestation bundles were made for memu_py-1.5.1.tar.gz:

Publisher:

release-please.yml on NevaMind-AI/memU

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

memu_py-1.5.1.tar.gz -

Subject digest:

476789f33003a19b6f5d2883ddbae0a4f1d2f3c9e52ecff445e26620473d0f48 - Sigstore transparency entry: 1157765230

- Sigstore integration time:

-

Permalink:

NevaMind-AI/memU@357aefc8012705bfde723f141d52f675fe712bed -

Branch / Tag:

refs/heads/main - Owner: https://github.com/NevaMind-AI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release-please.yml@357aefc8012705bfde723f141d52f675fe712bed -

Trigger Event:

push

-

Statement type:

File details

Details for the file memu_py-1.5.1-cp313-abi3-win_amd64.whl.

File metadata

- Download URL: memu_py-1.5.1-cp313-abi3-win_amd64.whl

- Upload date:

- Size: 272.5 kB

- Tags: CPython 3.13+, Windows x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

980613a3758c453436ac71a6f5ed607236355aa2d04eb8a9bb878cf176a98c17

|

|

| MD5 |

e632efff3025bf5cf4e029beeb4bf1a3

|

|

| BLAKE2b-256 |

e25b9418f93faffdb1707f89f4741919df4add6d6c5500fa295cb670d30260c1

|

Provenance

The following attestation bundles were made for memu_py-1.5.1-cp313-abi3-win_amd64.whl:

Publisher:

release-please.yml on NevaMind-AI/memU

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

memu_py-1.5.1-cp313-abi3-win_amd64.whl -

Subject digest:

980613a3758c453436ac71a6f5ed607236355aa2d04eb8a9bb878cf176a98c17 - Sigstore transparency entry: 1157765310

- Sigstore integration time:

-

Permalink:

NevaMind-AI/memU@357aefc8012705bfde723f141d52f675fe712bed -

Branch / Tag:

refs/heads/main - Owner: https://github.com/NevaMind-AI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release-please.yml@357aefc8012705bfde723f141d52f675fe712bed -

Trigger Event:

push

-

Statement type:

File details

Details for the file memu_py-1.5.1-cp313-abi3-manylinux_2_39_x86_64.whl.

File metadata

- Download URL: memu_py-1.5.1-cp313-abi3-manylinux_2_39_x86_64.whl

- Upload date:

- Size: 408.7 kB

- Tags: CPython 3.13+, manylinux: glibc 2.39+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

14b091e7e26e5c61a6f366cc120cf8cbeaed38f8af84f74cd2c9f986b43d69c2

|

|

| MD5 |

6acfef774b4c2e2e3f0e122848a57bff

|

|

| BLAKE2b-256 |

fdcfa3de0da0f3467a2c8449b96f758fdf8911a97a4490f6546cd81f6ead3d20

|

Provenance

The following attestation bundles were made for memu_py-1.5.1-cp313-abi3-manylinux_2_39_x86_64.whl:

Publisher:

release-please.yml on NevaMind-AI/memU

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

memu_py-1.5.1-cp313-abi3-manylinux_2_39_x86_64.whl -

Subject digest:

14b091e7e26e5c61a6f366cc120cf8cbeaed38f8af84f74cd2c9f986b43d69c2 - Sigstore transparency entry: 1157765377

- Sigstore integration time:

-

Permalink:

NevaMind-AI/memU@357aefc8012705bfde723f141d52f675fe712bed -

Branch / Tag:

refs/heads/main - Owner: https://github.com/NevaMind-AI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release-please.yml@357aefc8012705bfde723f141d52f675fe712bed -

Trigger Event:

push

-

Statement type:

File details

Details for the file memu_py-1.5.1-cp313-abi3-manylinux_2_39_aarch64.whl.

File metadata

- Download URL: memu_py-1.5.1-cp313-abi3-manylinux_2_39_aarch64.whl

- Upload date:

- Size: 400.9 kB

- Tags: CPython 3.13+, manylinux: glibc 2.39+ ARM64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

f14ff39c47ecb3113c62ea9b498968d0bfbdd32467b1a1309a04237b22f96ac5

|

|

| MD5 |

19fbc954e710c8af4ab0f9722cc072c2

|

|

| BLAKE2b-256 |

87a249753e1fa7cea4143a4494de7d6f9c8b25c9df4c110942c60cf57a92da63

|

Provenance

The following attestation bundles were made for memu_py-1.5.1-cp313-abi3-manylinux_2_39_aarch64.whl:

Publisher:

release-please.yml on NevaMind-AI/memU

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

memu_py-1.5.1-cp313-abi3-manylinux_2_39_aarch64.whl -

Subject digest:

f14ff39c47ecb3113c62ea9b498968d0bfbdd32467b1a1309a04237b22f96ac5 - Sigstore transparency entry: 1157765526

- Sigstore integration time:

-

Permalink:

NevaMind-AI/memU@357aefc8012705bfde723f141d52f675fe712bed -

Branch / Tag:

refs/heads/main - Owner: https://github.com/NevaMind-AI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release-please.yml@357aefc8012705bfde723f141d52f675fe712bed -

Trigger Event:

push

-

Statement type:

File details

Details for the file memu_py-1.5.1-cp313-abi3-macosx_11_0_arm64.whl.

File metadata

- Download URL: memu_py-1.5.1-cp313-abi3-macosx_11_0_arm64.whl

- Upload date:

- Size: 375.8 kB

- Tags: CPython 3.13+, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

bb123aebc509e670449ff48bdd16ce71adfe620f9e6a0aed8b94f05d0d921f27

|

|

| MD5 |

ec466022a7ad5df2a1c14883c9fab39d

|

|

| BLAKE2b-256 |

a706c5af830fa14eece0105e0aa915e9c6d5b54820121bab72215c32ba812b5b

|

Provenance

The following attestation bundles were made for memu_py-1.5.1-cp313-abi3-macosx_11_0_arm64.whl:

Publisher:

release-please.yml on NevaMind-AI/memU

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

memu_py-1.5.1-cp313-abi3-macosx_11_0_arm64.whl -

Subject digest:

bb123aebc509e670449ff48bdd16ce71adfe620f9e6a0aed8b94f05d0d921f27 - Sigstore transparency entry: 1157765441

- Sigstore integration time:

-

Permalink:

NevaMind-AI/memU@357aefc8012705bfde723f141d52f675fe712bed -

Branch / Tag:

refs/heads/main - Owner: https://github.com/NevaMind-AI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release-please.yml@357aefc8012705bfde723f141d52f675fe712bed -

Trigger Event:

push

-

Statement type:

File details

Details for the file memu_py-1.5.1-cp313-abi3-macosx_10_12_x86_64.whl.

File metadata

- Download URL: memu_py-1.5.1-cp313-abi3-macosx_10_12_x86_64.whl

- Upload date:

- Size: 378.3 kB

- Tags: CPython 3.13+, macOS 10.12+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

24d4a71650997267ad6f00a42cabdde4474e21ad04809cedd66ae5ed6c9f2784

|

|

| MD5 |

eac0be6ee0357a8909247a10be675ebb

|

|

| BLAKE2b-256 |

f171b9d6409304f2455f1fdd2b8fcaef5419b1890ee802a1bd5b68e8b6d43bd2

|

Provenance

The following attestation bundles were made for memu_py-1.5.1-cp313-abi3-macosx_10_12_x86_64.whl:

Publisher:

release-please.yml on NevaMind-AI/memU

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

memu_py-1.5.1-cp313-abi3-macosx_10_12_x86_64.whl -

Subject digest:

24d4a71650997267ad6f00a42cabdde4474e21ad04809cedd66ae5ed6c9f2784 - Sigstore transparency entry: 1157765659

- Sigstore integration time:

-

Permalink:

NevaMind-AI/memU@357aefc8012705bfde723f141d52f675fe712bed -

Branch / Tag:

refs/heads/main - Owner: https://github.com/NevaMind-AI

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release-please.yml@357aefc8012705bfde723f141d52f675fe712bed -

Trigger Event:

push

-

Statement type: