Spegel - Reflect the web through AI. A terminal browser with multiple AI-powered views.


Spegel automatically rewrites websites into Markdown optimised for viewing in the terminal. Read the intro blog post here.

This is a proof of concept, so bugs are to be expected. Feel free to raise an issue or open a pull request.

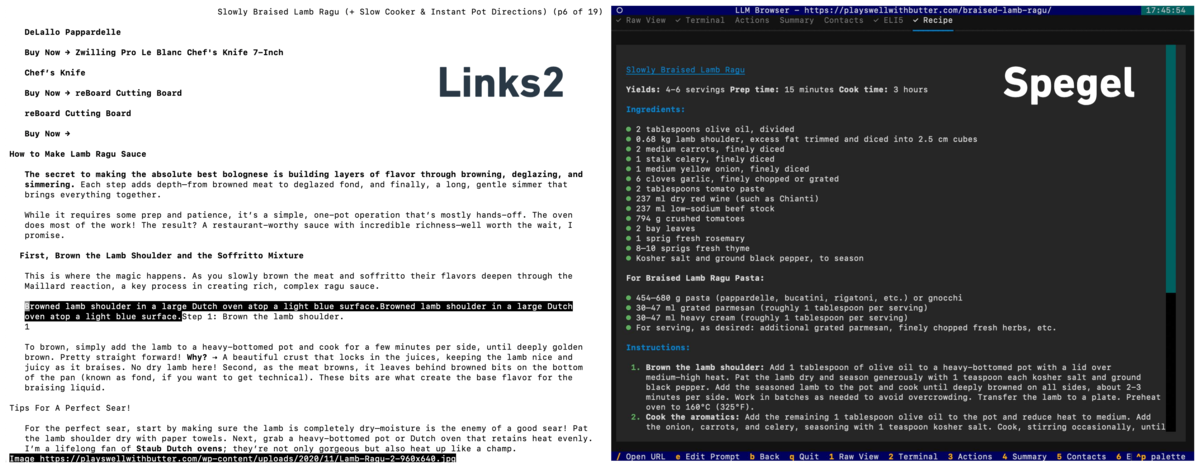

Screenshot

Sometimes you don't want to read through someone's life story just to get to a recipe.

Installation

Requires Python 3.11+

$ pip install spegel

Or clone the repo and install it in editable mode:

# Clone and enter the directory

$ git clone https://github.com/simedw/spegel.git

$ cd spegel

# Install dependencies and the CLI

$ pip install -e .

API Keys

Spegel uses litellm, which supports most common LLMs, both local and hosted.

By default, Gemini 2.5 Flash Lite is used, which requires you to set the GEMINI_API_KEY environment variable. See env_example.txt for an example.
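A missing key usually only shows up once the first model request fails. The pre-flight check below is a minimal sketch, assuming only that the key is read from the process environment. The helper name `missing_keys` is hypothetical and not part of Spegel.

```python
import os


def missing_keys(required, env=None):
    """Return the names in `required` that are unset or blank in `env`.

    `env` defaults to the real process environment; passing a dict
    makes the function easy to try out in isolation.
    """
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name, "").strip()]


if __name__ == "__main__":
    # GEMINI_API_KEY is the variable the default model needs.
    missing = missing_keys(["GEMINI_API_KEY"])
    if missing:
        print("Set these before launching spegel:", ", ".join(missing))
    else:
        print("API key found.")
```

If you switch to another provider, pass that provider's variable name instead.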

Usage

Launch the browser

spegel # Start with welcome screen

spegel bbc.com # Open a URL immediately

Or, equivalently:

python -m spegel # Start with welcome screen

python -m spegel bbc.com

Basic controls

- / – Open URL input
- Tab/Shift+Tab – Cycle links
- Enter – Open selected link
- e – Edit LLM prompt for current view
- b – Go back
- q – Quit

Editing settings

Spegel loads settings from a TOML config file. You can customize views, prompts, and UI options.

Config file search order:

- ./.spegel.toml (current directory)
- ~/.spegel.toml
- ~/.config/spegel/config.toml
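The search order can be sketched in a few lines of Python. This is an illustration rather than Spegel's actual loader: it assumes the first file that exists wins and that files are not merged. The function names `candidate_paths` and `find_config` are hypothetical.

```python
from pathlib import Path


def candidate_paths(cwd=None, home=None):
    """Return the config locations in the documented search order."""
    cwd = Path.cwd() if cwd is None else Path(cwd)
    home = Path.home() if home is None else Path(home)
    return [
        cwd / ".spegel.toml",
        home / ".spegel.toml",
        home / ".config" / "spegel" / "config.toml",
    ]


def find_config(cwd=None, home=None):
    """Return the first existing config file, or None if there is none."""
    for path in candidate_paths(cwd, home):
        if path.is_file():
            return path
    return None


if __name__ == "__main__":
    found = find_config()
    print(found if found else "No config file found; defaults would apply.")
```

Running this from a project directory is a quick way to see which file would take precedence under that assumption.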

To edit settings:

- Copy the example config:

cp example_config.toml .spegel.toml # or create ~/.spegel.toml

- Edit .spegel.toml in your favorite editor.

Example snippet:

[settings]

default_view = "terminal"

app_title = "Spegel"

[ai]

default_model="gpt-4.1-nano"

[[views]]

id = "raw"

name = "Raw View"

hotkey = "1"

order = "1"

prompt = ""

[[views]]

id = "terminal"

name = "Terminal"

hotkey = "2"

order = "2"

prompt = "Transform this webpage into the perfect terminal browsing experience! ..."

model="claude-3-5-haiku-20241022"

Local Models with Ollama

To run with a local model using Ollama, first pull and serve your desired model:

$ ollama pull llama2

$ ollama serve

Then set the model in .spegel.toml as follows:

model = "ollama/llama2"

Ollama supports models like Llama, Mistral, and many others.
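If pages fail to render with a local model, it helps to confirm first that the Ollama server is reachable. The sketch below queries Ollama's `/api/tags` endpoint (which lists locally available models) on its default port 11434. The helper name `ollama_is_up` is hypothetical, and this check is not something Spegel itself is known to perform.

```python
import urllib.request


def ollama_is_up(base_url="http://localhost:11434", timeout=2.0):
    """Return True if an Ollama server answers at `base_url`."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # URLError, connection refused and timeouts are all OSError subclasses.
        return False


if __name__ == "__main__":
    if ollama_is_up():
        print("Ollama is reachable.")
    else:
        print("Ollama is not reachable; is `ollama serve` running?")
```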

License

MIT License - see LICENSE file for details.

For more, see the code or open an issue!


File details

Details for the file spegel-0.1.3.tar.gz.

File metadata

- Download URL: spegel-0.1.3.tar.gz

- Upload date:

- Size: 130.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 976747c7a302723b89fd1de27cc9ae4168a609615c5da13d3ccfa68ff0b379f9 |
| MD5 | 9e03015531c279e67a04f67fc30167b8 |
| BLAKE2b-256 | 42398682d58898e93f2e14ade2439e400bd1b38c8f61d686af7a05d60cf4f7ab |
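To check a downloaded file against the published SHA256 digest, a short script using the standard-library `hashlib` is enough. This is a minimal sketch. The function names `sha256_of` and `matches` are illustrative, and the file path in the usage note is assumed to be wherever you saved the download.

```python
import hashlib


def sha256_of(path, chunk_size=65536):
    """Return the hex SHA256 digest of the file at `path`, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches(path, expected_hex):
    """Compare the file's digest with the published one, ignoring case."""
    return sha256_of(path) == expected_hex.strip().lower()
```

For example, `matches("spegel-0.1.3.tar.gz", "976747c7a302723b89fd1de27cc9ae4168a609615c5da13d3ccfa68ff0b379f9")` should return `True` for an intact copy of the source distribution.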

Provenance

The following attestation bundles were made for spegel-0.1.3.tar.gz:

Publisher: publish.yml on simedw/spegel

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: spegel-0.1.3.tar.gz
  - Subject digest: 976747c7a302723b89fd1de27cc9ae4168a609615c5da13d3ccfa68ff0b379f9
- Sigstore transparency entry: 273471752
- Sigstore integration time:
- Permalink: simedw/spegel@27727f0edd73a54e9d03573601fdcb17f8a5ea0a
- Branch / Tag: refs/tags/v0.1.3
- Owner: https://github.com/simedw
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@27727f0edd73a54e9d03573601fdcb17f8a5ea0a
- Trigger Event: release

File details

Details for the file spegel-0.1.3-py3-none-any.whl.

File metadata

- Download URL: spegel-0.1.3-py3-none-any.whl

- Upload date:

- Size: 22.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f8aaf1bff71a1e55a2e6b79399e0b538d9da1294d200047bce8ce50e4ea76067 |
| MD5 | 3d5aa7d0d0aa492451904389e60cac81 |
| BLAKE2b-256 | eea89b3b6ea49bb276fa79f72de83c960b328dd60f8b59168ebdc506c967cf1c |

Provenance

The following attestation bundles were made for spegel-0.1.3-py3-none-any.whl:

Publisher: publish.yml on simedw/spegel

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: spegel-0.1.3-py3-none-any.whl
  - Subject digest: f8aaf1bff71a1e55a2e6b79399e0b538d9da1294d200047bce8ce50e4ea76067
- Sigstore transparency entry: 273471755
- Sigstore integration time:
- Permalink: simedw/spegel@27727f0edd73a54e9d03573601fdcb17f8a5ea0a
- Branch / Tag: refs/tags/v0.1.3
- Owner: https://github.com/simedw
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish.yml@27727f0edd73a54e9d03573601fdcb17f8a5ea0a
- Trigger Event: release